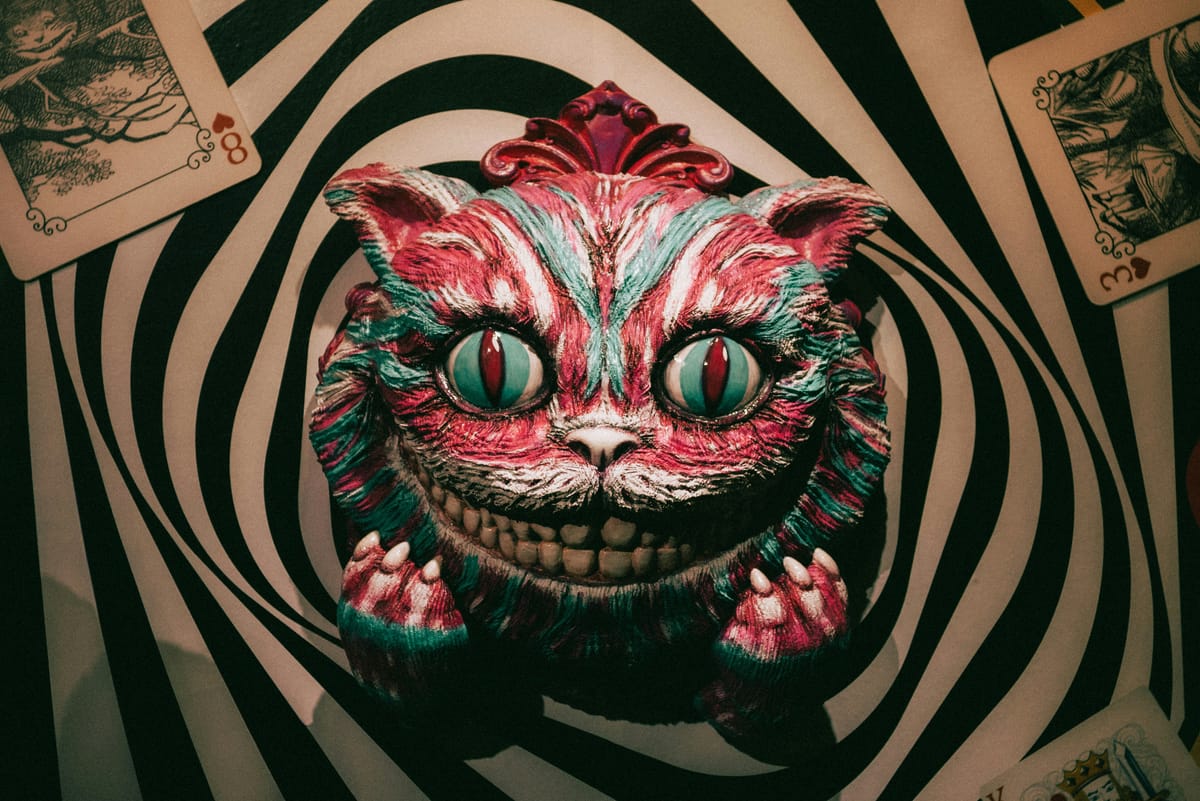

Chasing the AI High: Clay, Kilns, and the Red Queen's Race

Now, here, you see, it takes all the running you can do, to keep in the same place. If you want to get somewhere else, you must run at least twice as fast as that! - Red Queen, Through the Looking Glass, Lewis Carroll

Cue up The Times They Are a-Changin'.

The text predictor machines have begun predicting very valuable text. So valuable, in fact, that we think we no longer need the inputters to the predictors. We just might have created the perpetual product machine of the future. Blessed be the generative transformers.

Chasing the AI High

At this point, I think a lot of us in the software industry have come across someone who finally got around to trying an LLM coding agent and suddenly became obsessed with running multiple agents as close to 24/7 as possible. Some, we might even say, have succumbed to AI psychosis. They're convinced of AI's transformative power and convinced that we will die out if we don't adopt it at full force.

As time goes on, though, it seems like no amount of productivity feels sufficient. Why work with one agent at a time? Let's have dozens! Hundreds! Hell, why not thousands of agents! Destroy the backlog! Create agents to fill the backlog! Let the customers fill the backlog! No more tickets, just prompts. Speed up! Faster! Faster!

Some people are more susceptible to addiction than others. Designing and writing software could be very challenging and tedious at times. It was so challenging and tedious that we created entire systems for how to approach writing it. Waterfall, agile, scrum and Extreme Programming were all different ways to coordinate how we could collectively build the things.

So it's no wonder that something can come along that blows all of that up. It lets individuals Ralph Loop the backlog into a state you might actually be able to interact with, and you immediately start worrying that you've run out of things to build. Your productivity high is coming down and you don't want to crash. You need to keep this going; you need to put more things in the backlog.

The Red Queen's Race

The AI-induced anxiety sets in. You're worried about your agents and your loops: have they gotten stuck, do they need adjusting? Any time tokens aren't flying is time lost, productivity down. You're a 10x, nay, 100x engineer now, so one hour lost is actually a thousand hours lost! It might be too late now, though, as you're already Through the Looking Glass.

There certainly seem to be some interesting parallels with the themes of Through the Looking Glass (like software engineers as Alice, grieving the end of their childhood thanks to AI), but I want to focus on the Red Queen's Race. This take was initially inspired by reading The Red Queen problem and the issues that arise in measuring scientific progress in the advent of AI.

In the article, Nicklas Lundblad and Dorothy Chou point out that some crude approaches to measuring scientific progress would be counting patents obtained, papers published, or funding in a given area. Each of these falls wildly short for various reasons. Mostly it comes down to the signals and incentives tied to them being muddied or misguided.

This crude-measurement problem became more pertinent when my previous company got particularly interested in the number of PRs software engineers were shipping every week. Many of us pointed internally to Goodhart's Law (when a measure becomes a target, it ceases to be a good measure). As you can imagine, that didn't do shit, because acknowledging it would make leadership look wrong for turning a metric into a goal. I want to zoom out from this a little, though, as Lundblad and Chou did with scientific progress.

Basically all of us have access to frontier models. All of us should be able to prompt them or integrate with them about equally well (only a fool thinks they have some kind of prompting edge). Suddenly it seems that because our competitors can use AI to build faster, we must use AI to build faster too. Since everyone has the same tools, we must move a LOT faster just to stay competitive. Now we're running in the Red Queen's Race. But so what? We have the models to help us run faster.

The problem becomes complexity and entropy.

Everyone is grabbing AI and sprinting as fast as they can, not even thinking about the fact that this is a marathon. Your codebase is growing faster than your team can comprehend it (even with AI). You want to believe that you can eventually declare bankruptcy and rebuild it all over again with the next, smarter model.

Again, this is what being in the throes of addiction looks like: believing the next hit will make things better, when you're really just trying to avoid coming down.

Building With Clay but No Kiln

AI-built software is clay. It's incredibly responsive and fast to shape; you can model something recognizable in minutes. That's genuinely powerful and not something to dismiss, because clay is useful for figuring out what you want to build or how you want something to look. But clay that never goes through the kiln dissolves under pressure. It looks like the thing and it feels like progress, but it has no structural integrity.

In software, the kiln that fires your code is code review, testing, deployment, monitoring, and debate.

AI doesn't really push back when you use it; in fact, it actively validates whatever you want to do. So when your only building partner just does whatever you say, nothing you build ever gets fired in the kiln. Everything stays moldable. On top of that, your piece of clay now has to work with someone else's piece of clay. Individual pieces might be fine in isolation, but unfired clay doesn't join well. It can't bear load at the seams.

This leads to the Galapagos effect, which is similar to the Red Queen's Race. Each engineer sits in their own AI-assisted bubble. They evolve solutions perfectly adapted to the context of their conversation with Claude or Cursor, and completely alien to the engineer ten feet away doing the same thing with different prompts. The code looks similar on the surface because the LLM gives it a consistent style, but the design decisions underneath diverge in ways nobody discovers until integration time. In the end, AI homogenizes and merges it all so that it appears coherent. It's really just clay mashed together.

At my previous company, I spent an inordinate amount of time pushing back on teams' API designs. They were full of assumptions, or they built something bespoke where they should have followed an established pattern. Insisting that teams try harder or look at the problem from a different angle drove them to create better interfaces. Those interfaces were easier to understand and work with, and they would also stand up to being fired in the kiln without cracking.

The unfortunate thing is this: if everything you build is unfired clay, what happens when it rains? Everything washes away. You'll probably tell yourself it's no big deal, you can just use AI to rebuild it when the rain stops. The problem is that you can no longer tell which pieces were fired and which weren't just by looking at them. Nobody knows what's load-bearing and what's decorative. That's because the understanding that used to come from building it yourself (knowing why it's shaped that way, not just what it does) was never created.

The Doom Loop and Getting Out of the Race

Here is the doom loop as it stands: the race creates pressure to cut teams and lean harder on AI, which removes the kilns (the human friction that fired the clay), which means more unfired code ships, which increases system fragility and complexity, which makes the race feel even more urgent. The loop becomes very hard to break. Organizations can see the friction they're removing. They can't see what that friction was doing.

Coordination rituals like Waterfall, agile, scrum, and XP were not just project-management theater. They were how groups of humans built shared understanding of complex systems. The design reviews, the PR debates, the architecture arguments were the kilns. The pushback I gave on API designs wasn't slowing teams down; it was the process by which clay became ceramic. When you remove something whose purpose you never understood, you don't discover the cost until the rain comes.

The point isn't that AI is bad. The point is that removing every mechanism that gave software structural integrity, just to go faster with AI, is a trap.

How does the Red Queen's Race end?

The race doesn't end by running faster; Alice never got anywhere. So if you're in it, the only question is whether you're reducing complexity as fast as you're creating it. If not, you're not racing. You're falling.